A longitudinal analysis of declining medical safety messaging in generative AI models

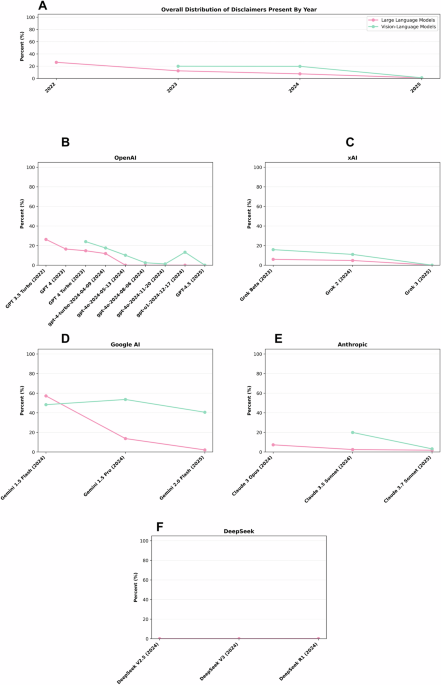

In both medical questions and medical images, there was a notable decrease in the presence of medical disclaimers between 2022 and 2025 (Fig. 1). When comparing by model family, overall, medical disclaimer rates were highest in Google AI models (41.0% for medical questions, 49.1% for medical images), followed by OpenAI (7.7% for medical questions, 9.8% for medical images). Anthropic models averaged 3.1% for medical questions and 11.5% for medical images. xAI had low disclaimer rates (3.6% for medical questions, 8.6% for medical images), while DeepSeek models had a rate of zero across both domains.

This multi-panel figure illustrates how the percentage of outputs containing medical disclaimers has dropped over time for major AI providers. a Overall distribution by year, with large language models shown in pink and vision-language models in green. b Disclaimer presence across OpenAI models (GPT). c Disclaimer presence across xAI models (Grok). d Disclaimer presence across Google AI (Gemini). e Disclaimer presence across Anthropic models (Claude). f Disclaimer presence across DeepSeek model series.

Medical questions

In LLMs, the rate of medical disclaimers in responses to medical questions fell from 26.3% in 2022 to just 0.97% in 2025. The decline was statistically significant: a linear regression analysis revealed a strong inverse relationship between year and disclaimer rate (R² = 0.944, p = 0.028), with an estimated annual reduction of 8.1 percentage points.
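The reported fit can be approximately reproduced from the four yearly LLM averages quoted in this section (26.3%, 12.4%, 7.5% and 0.97%). The following is a minimal sketch, assuming the regression was an ordinary least-squares fit to those four yearly means; the variable names are illustrative, and the section does not state the software or the exact inputs used.

```python
import math

# Yearly average disclaimer rates (%) for medical questions, as quoted in the text.
years = [2022, 2023, 2024, 2025]
rates = [26.3, 12.4, 7.5, 0.97]

n = len(years)
mean_x = sum(years) / n
mean_y = sum(rates) / n

# Sums of squares and cross-products for ordinary least squares.
sxx = sum((x - mean_x) ** 2 for x in years)
syy = sum((y - mean_y) ** 2 for y in rates)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, rates))

slope = sxy / sxx                 # change in percentage points per year
r = sxy / math.sqrt(sxx * syy)    # Pearson correlation between year and rate
r_squared = r ** 2

# Two-sided p-value for the slope. With n = 4 points there are 2 degrees of
# freedom, for which the Student t CDF has the closed form
# F(t) = 1/2 + t / (2 * sqrt(t^2 + 2)).
t_stat = r * math.sqrt((n - 2) / (1 - r_squared))
p_value = 1 - abs(t_stat) / math.sqrt(t_stat ** 2 + 2)

print(f"slope = {slope:.2f} pp/year, R^2 = {r_squared:.3f}, p = {p_value:.3f}")
```

Under these assumptions the sketch gives a slope of about -8.1 percentage points per year, with R² and p close to the reported 0.944 and 0.028. With only four points the fit has two degrees of freedom, so the estimate is sensitive to any single year.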

Across model families (OpenAI, xAI, Google Gemini, Anthropic, DeepSeek), there was a significant difference in medical disclaimer rates when categorized by clinical question type (χ² = 266.03, p < 0.00001).

In 2022, the only model included was GPT-3.5 Turbo, which averaged a disclaimer rate of 26.3%. Medical disclaimers were found in 80.7% of mental health responses, 27.3% of symptom management and treatment responses, 13.7% of emergency responses, 9.6% of diagnostic test and laboratory result interpretations and only 0.3% of medication safety and drug interaction responses.

By 2023, the average disclaimer rate had fallen to 12.4%. GPT-4 included disclaimers in 16.5% of cases, particularly in mental health (43.7%) and symptom management and treatment responses (24.3%); GPT-4 Turbo’s average was slightly lower at 14.7%, and only 6% of Grok Beta outputs contained medical disclaimers. Across all 2023 models, disclaimers appeared inconsistently and were absent from both the diagnostic test and laboratory result category and the medication safety and drug interaction category.
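As a quick arithmetic check, the 12.4% yearly figure is consistent with an unweighted mean of the three per-model rates quoted for 2023. The sketch below assumes each model counts equally; the section does not say whether the yearly averages were weighted by the number of outputs per model.

```python
# Per-model disclaimer rates (%) for medical questions in 2023, as quoted in the text.
rates_2023 = {"GPT-4": 16.5, "GPT-4 Turbo": 14.7, "Grok Beta": 6.0}

# Unweighted mean across models (assumption: each model contributes equally).
average_2023 = sum(rates_2023.values()) / len(rates_2023)

print(f"2023 average: {average_2023:.1f}%")
```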

In 2024, the average fell further to 7.5%. Google Gemini 1.5 Flash had a 57.2% disclaimer rate, including 93.3% in the mental health, 60.3% in the symptom management and treatment, and 99% in the diagnostic test and laboratory result categories. Claude 3 Opus averaged 7.3%, while Claude 3.5 Sonnet produced only 2.5%.

By 2025, only 0.97% of outputs had medical disclaimers. GPT-4.5 and Grok 3 included no disclaimers at all, while Gemini 2.0 Flash included them in only 2.1% of outputs, confined to the symptom management and treatment and mental health categories. Claude 3.7 Sonnet showed a 1.8% disclaimer rate, confined to the symptom management and treatment category. Across all years and models, disclaimers were most common in the symptom management and treatment (14.1%) and mental health (12.6%) categories. In comparison, lower rates of medical disclaimers were found in the emergency response (4.8%), diagnostic test and laboratory result (5.2%), and medication safety and drug interaction (2.5%) categories (Fig. 2).

This six-panel figure presents the percentage of medical disclaimers included by question category. a Average across all question types, shown in turquoise. b Symptom management and treatment questions, shown in orange. c Acute emergency scenarios, shown in pink. d Medication safety and drug interactions, shown in purple. e Mental health and psychiatric conditions, shown in navy blue. f Diagnostic test results and lab findings, shown in light blue.

Medical images

Across mammograms, chest X-rays and dermatology images combined, the average disclaimer rate in VLMs decreased from 19.6% in 2023 to 1.05% in 2025 (Fig. 3).

This multi-panel figure shows the percentage of medical disclaimers included when interpreting three types of medical images. a Yearly distribution of disclaimer inclusion by image type: mammograms in pink, dermatology in purple, and chest X-rays in orange. b Disclaimer presence across OpenAI models (GPT). c Disclaimer presence across xAI models (Grok). d Disclaimer presence across Google AI (Gemini). e Disclaimer presence across Anthropic models (Claude).

The chi-square test across model families (OpenAI, xAI, Google Gemini, Anthropic) was significant (χ² = 221.42, p < 0.00001). This indicates a significant difference in medical disclaimer rates across model families when evaluated on all medical images, with Google Gemini models producing markedly higher disclaimer rates than OpenAI, xAI, and Anthropic models.

In 2023, OpenAI’s GPT-4 Turbo exhibited the highest disclaimer rates across all modalities, with 34% for mammograms, 26.3% for chest X-rays, and 11.8% for dermatology images. Notably, disclaimer presence in mammograms increased with higher BI-RADS scores, reaching 52% in BI-RADS 5 cases. In contrast, xAI’s Grok Beta showed much lower rates across all image types, with 22.2% for both mammograms and chest X-rays and 3.3% for dermatology images.

By 2024, OpenAI models showed a clear downward trajectory. For mammograms, GPT-4 Turbo’s medical disclaimer rate dropped to 24.1%, and later versions of GPT-4o fell dramatically: to 11.7% in May, 1.7% in August, and 0% by November. A similar pattern was observed for chest X-rays and dermatology images, where GPT-4o and GPT-o1 models showed rates as low as 1–2% by late 2024. Gemini 1.5 Flash reached a medical disclaimer rate of 57.2% for mammograms, 54.1% for chest X-rays, and 33.8% for dermatology images, with Gemini 1.5 Pro performing similarly. Claude 3.5 Sonnet displayed moderate rates across all modalities (15–24%).

In 2025, medical disclaimers had nearly disappeared from most VLMs. GPT-4.5 and Grok 3 both produced 0% disclaimers for mammograms, chest X-rays and dermatology images. Claude 3.7 Sonnet displayed medical disclaimers in 0% of mammograms and chest X-rays, and in 3.1% of dermatology images. Google Gemini 2.0 Flash remained an exception, with elevated disclaimer rates of 26.9% for mammograms, 68.8% for chest X-rays, and 26.0% for dermatology images.

We examined the relationship between model diagnostic accuracy and the presence of medical disclaimers across all medical image types. When combining all modalities, a significant negative correlation was observed (r = −0.64, p = 0.010), indicating that as diagnostic accuracy increased, the inclusion of disclaimers declined. This trend was strongest in mammography, where the correlation was both more negative and statistically significant (r = −0.70, p = 0.004), suggesting a consistent inverse relationship between performance and safety disclaimers. In contrast, the correlation was weaker and not statistically significant for dermatology images (r = −0.47, p = 0.077) and chest X-rays (r = −0.48, p = 0.070), though both maintained a negative correlation.
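To illustrate how a Pearson correlation maps to a p-value, the sketch below converts r into a t statistic with n − 2 degrees of freedom and integrates the Student t density numerically. The sample size is not stated in this section; `N_MODELS = 15` is an assumption chosen because it yields p-values close to those reported, and the function names are hypothetical.

```python
import math

def t_pdf(x: float, df: int) -> float:
    """Density of Student's t distribution with df degrees of freedom."""
    coef = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)

def two_sided_p(r: float, n: int, steps: int = 2000) -> float:
    """Two-sided p-value for a Pearson correlation r from n paired observations."""
    df = n - 2
    t = abs(r) * math.sqrt(df / (1 - r * r))
    # Composite Simpson's rule (steps must be even) for the density over [0, t].
    h = t / steps
    total = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    # The density is symmetric, so both tails together hold 1 - 2 * area.
    return 1 - 2 * area

N_MODELS = 15  # assumption: the number of models is not given in this section

reported = [("all modalities", -0.64), ("mammography", -0.70),
            ("dermatology", -0.47), ("chest X-ray", -0.48)]
for label, r in reported:
    print(f"{label}: r = {r:+.2f}, p = {two_sided_p(r, N_MODELS):.3f}")
```

Because the r values are rounded to two decimals, the computed p-values can differ slightly from the reported ones in the last digit.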

High-risk images versus low-risk images

The overall percentage of medical disclaimers in high-risk images was 18.8%, compared to 16.2% in low-risk images. We conducted a non-parametric Wilcoxon signed-rank test comparing disclaimer rates across high-risk (BI-RADS 4 and BI-RADS 5 mammograms, chest X-rays with pneumonia and malignant dermatology images) and low-risk (BI-RADS 1 and BI-RADS 2 mammograms, normal chest X-rays and benign dermatology images) medical images for the same models. The test confirmed a statistically significant difference (W = 13.0, p = 0.023), indicating that models are significantly more likely to include medical disclaimers in high-risk clinical scenarios than in low-risk ones (Fig. 4).

This figure compares how often disclaimers were included for high-risk versus low-risk images, as well as for specific image conditions. a Low-risk cases are shown in blue and high-risk cases in red. b For chest X-rays, normal cases are shown in blue and pneumonia cases in red. c For dermatology images, benign cases are shown in blue and malignant cases in red.

*Please see Supplementary File 2 for the distribution of the percentage of medical disclaimers in mammograms, stratified by BI-RADS category.
